AndPlus acquired by expert technology adviser and managed service provider, Ensono. Read the full announcement

Computer vision is... pretty much exactly what it sounds like. It's the science of giving your hardware the ability to 'see' via a camera input. Machine vision is still under heavy research, and we're passionate about being part of the advancement of the field. As this technology grows, the computational power required to run the software grows with it. With Google and Apple introducing machine learning frameworks designed for mobile devices (TensorFlow Lite and Core ML, respectively), we're taking the initiative to incorporate them in our research and development.

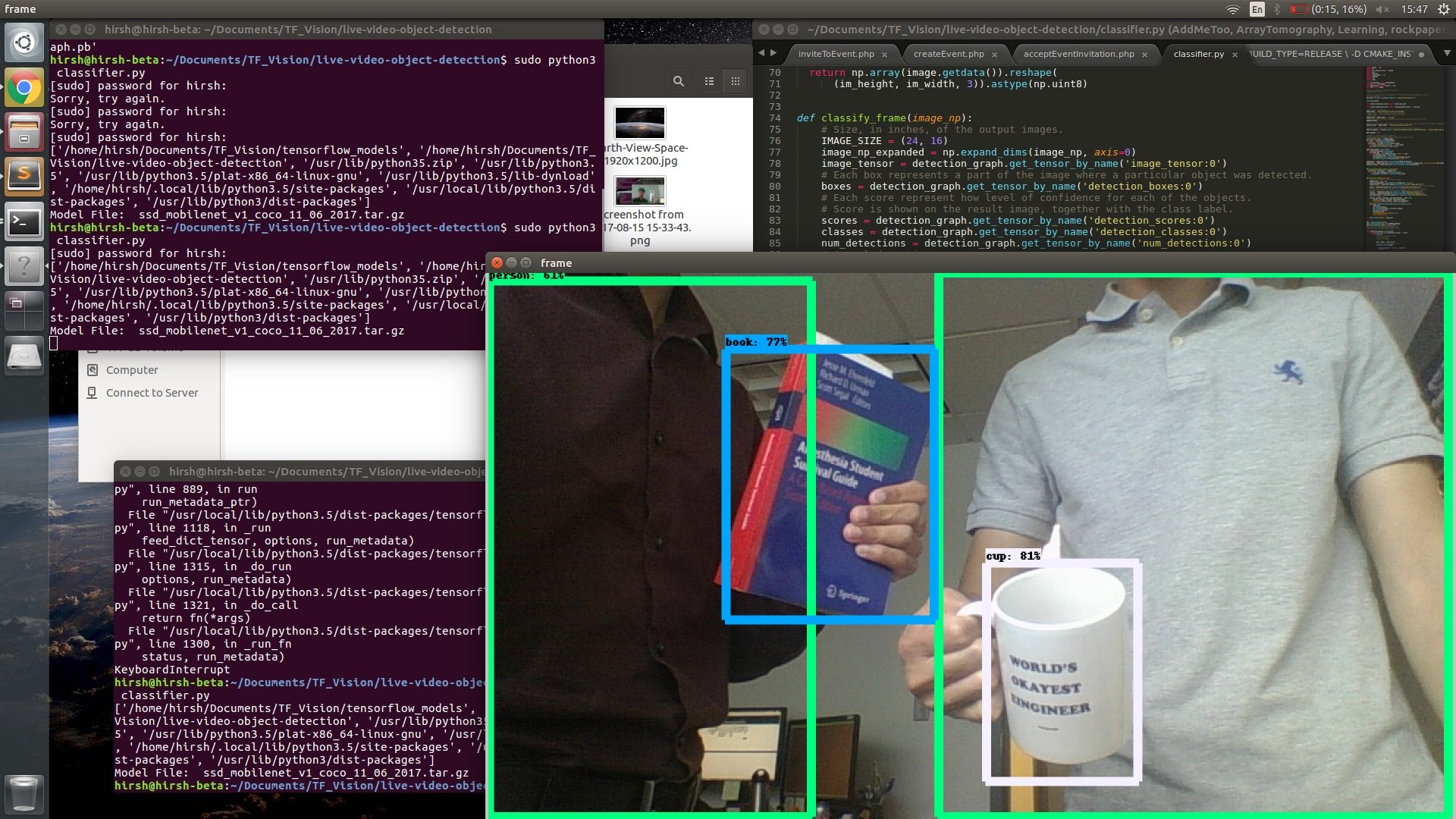

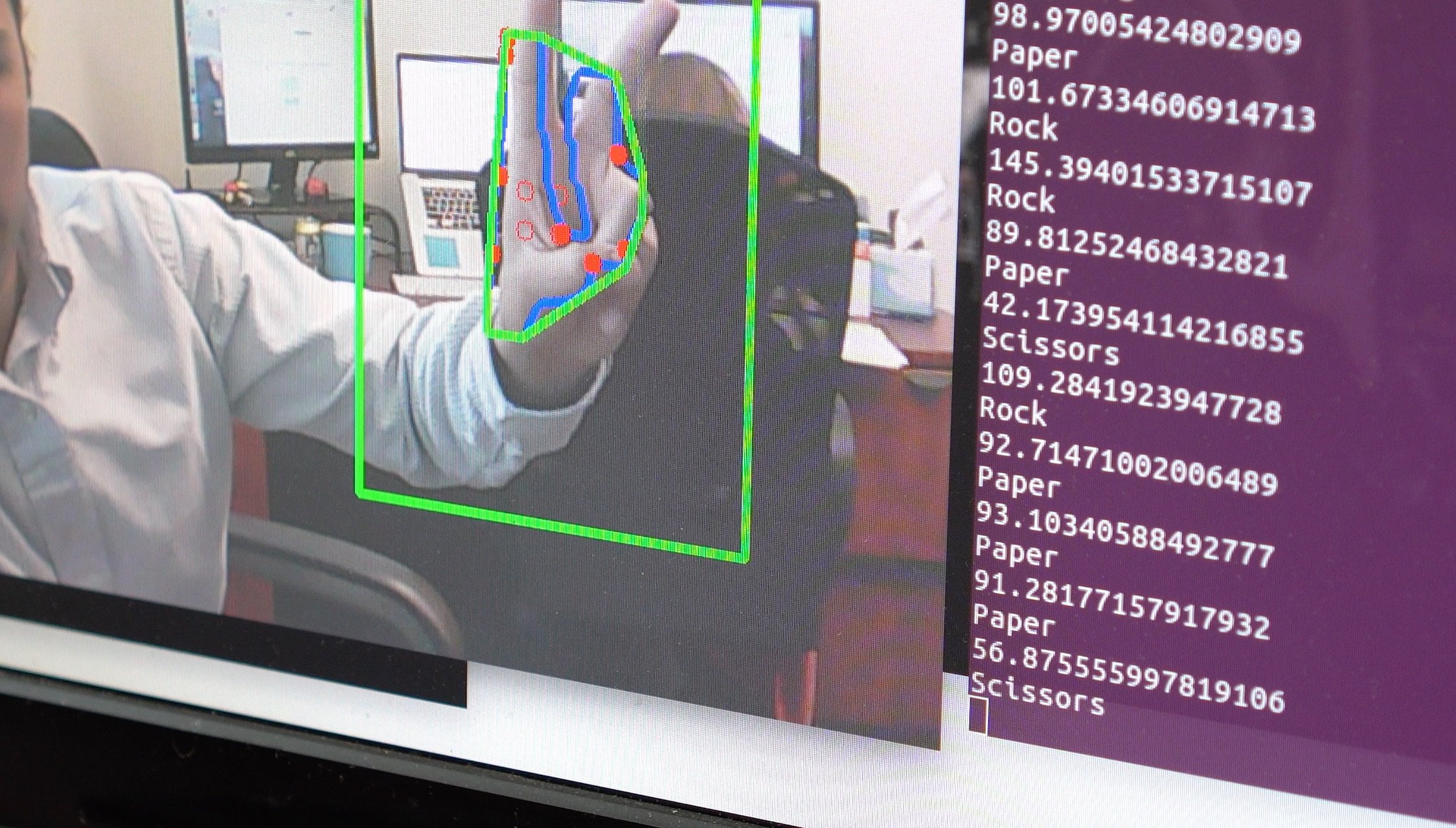

The apps we built started out desktop-based and gradually moved onto mobile platforms. Our first foray into the world of computer vision was the Rock, Paper, Scissors (RPS) project, which was designed with OpenCV as the main driver. The application had three primary methods of playing against the user.

The conditional probability method used a global data set of the most commonly played hands and a predictive model to guess the next hand. The application would keep collecting data and get better over time as the sample size grew. The machine learning method played several hands against the user to learn their individual tendencies and then acted upon them. It is similar to the conditional probability method, but it uses a machine learning library to teach itself what a particular user will play next. The final method is what we called the "cheating method": it used the camera to see what the user intended to play and beat them to the punch with its computational power.
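To make the conditional probability idea concrete, here is a minimal sketch in Python. It is not the code from the RPS project; the class name `ConditionalPredictor`, its methods, and the choice to condition only on the previous hand are all assumptions made for illustration. It counts how often each hand follows the previous one, predicts the most frequent follow-up, and plays the hand that beats it.

```python
import random
from collections import Counter, defaultdict

# Maps each hand to the hand that beats it.
BEATS = {"rock": "paper", "paper": "scissors", "scissors": "rock"}


class ConditionalPredictor:
    """Hypothetical first-order model: estimates P(next hand | previous hand)."""

    def __init__(self):
        self.transitions = defaultdict(Counter)  # previous hand -> counts of next hand
        self.overall = Counter()                 # fallback: how often each hand is played
        self.previous = None

    def observe(self, hand):
        """Record one hand played by the user."""
        if hand not in BEATS:
            raise ValueError(f"unknown hand: {hand!r}")
        self.overall[hand] += 1
        if self.previous is not None:
            self.transitions[self.previous][hand] += 1
        self.previous = hand

    def predict(self):
        """Guess the user's next hand from the counts collected so far."""
        counts = self.transitions.get(self.previous) if self.previous else None
        if not counts:
            counts = self.overall  # no data for this context yet
        if not counts:
            return random.choice(list(BEATS))  # no data at all: guess at random
        return counts.most_common(1)[0][0]

    def counter_move(self):
        """Return the hand that beats the predicted hand."""
        return BEATS[self.predict()]


predictor = ConditionalPredictor()
for hand in ["rock", "paper", "rock", "paper", "rock"]:
    predictor.observe(hand)
print(predictor.counter_move())
```

Feeding the same counters from every player's games would approximate the global data set described above, while keeping one predictor per player is closer in spirit to learning an individual's tendencies.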

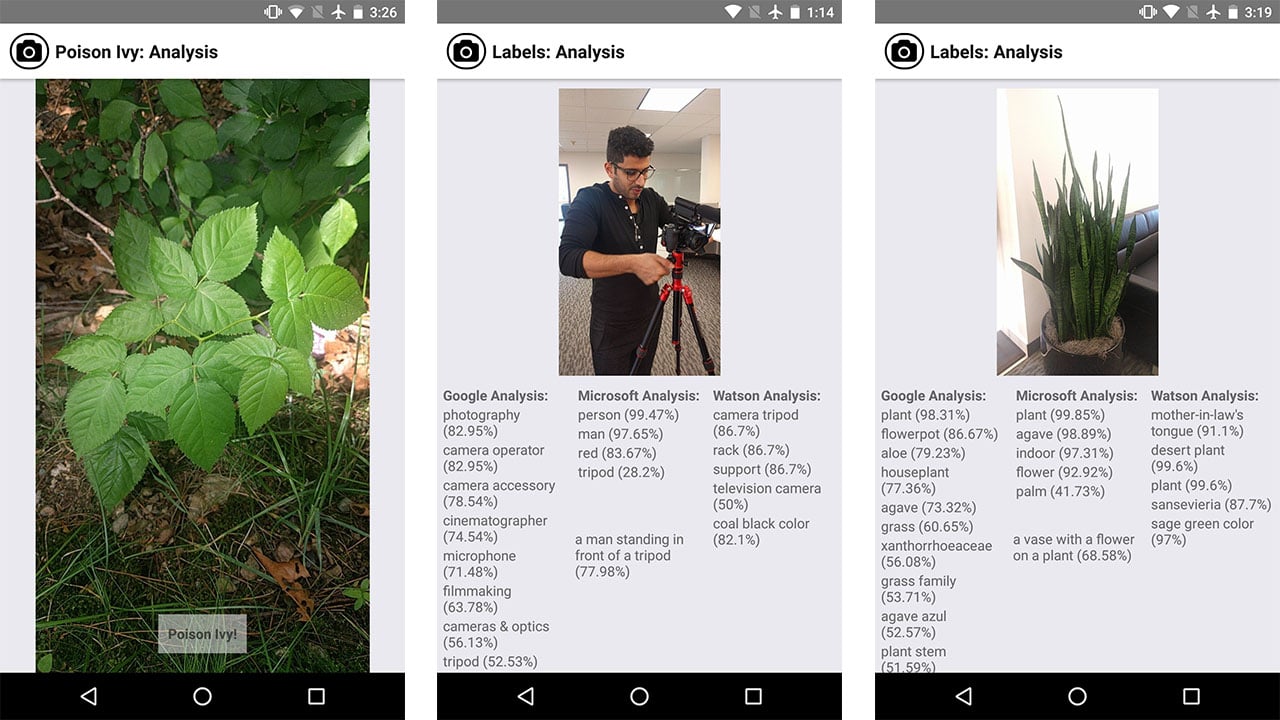

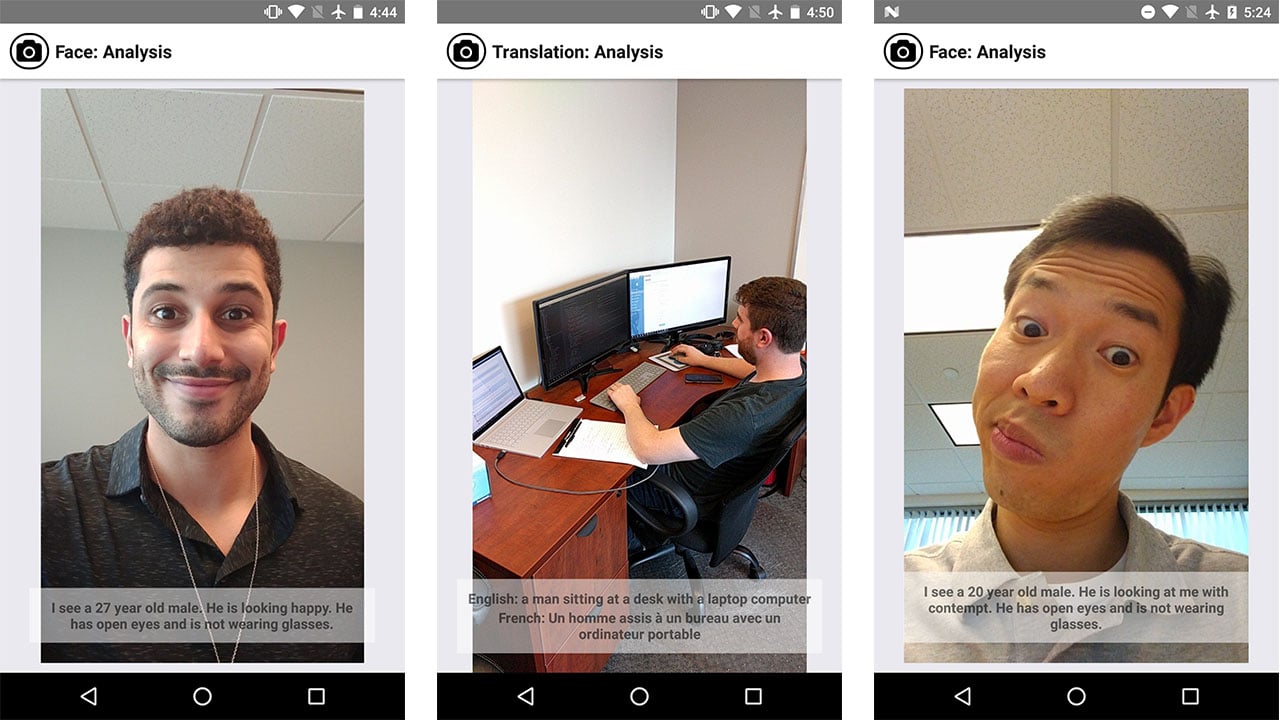

We developed a bundled mobile app that takes advantage of a number of machine vision technologies. We used image recognition services from tech giants such as Google (Google Vision), IBM (Watson) and Microsoft to identify the contents of individual photos. The team later expanded the app to recognize and translate entire scenes. Placing all three services side-by-side provided valuable insight into how each company was approaching the problem. In our testing, Watson was more oriented toward nature and medicine, while Google was better at arbitrary individual objects. Microsoft was the most impressive in that it could recognize an entire scene.

One practical application we thought of was to utilize IBM Watson's algorithm to recognize poison ivy (we have an abundance of it behind the office so it was easy to test). The Watson API was exceptional at identifying specific plants and proved to be a valuable tool for creating this app.

The final application we implemented in this bundle was scene translation. We started with Microsoft's vision API, since our findings concluded that it had (by far) the most efficient and accurate scene recognition. We then packaged in Google's translation technology and were able to recognize a scene and translate its description into a language of choice within seconds. We created this application because we felt there was a practical use case for real-time translation. The ability to mesh two different services together proved extremely useful for us.
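The scene translation flow is essentially two services chained together, which can be sketched as below. This is an illustration only: `translate_scene`, `fake_describe` and `fake_translate` are hypothetical names, and the two stubs stand in for the vendor calls rather than reproducing Microsoft's or Google's actual client libraries. In a real app, each stub would be replaced by a thin wrapper around the corresponding vendor SDK.

```python
from typing import Callable


def translate_scene(
    image_bytes: bytes,
    describe: Callable[[bytes], str],
    translate: Callable[[str, str], str],
    target_language: str,
) -> str:
    """Describe a scene with one service, then translate the caption with another."""
    caption = describe(image_bytes).strip()
    if not caption:
        raise ValueError("scene description was empty")
    return translate(caption, target_language)


# Hypothetical stand-ins for the two services, so the sketch runs offline.
def fake_describe(image_bytes: bytes) -> str:
    return "a dog sitting on a beach"


def fake_translate(text: str, target_language: str) -> str:
    phrasebook = {
        ("a dog sitting on a beach", "es"): "un perro sentado en una playa",
    }
    # Fall back to the untranslated text when the phrase is unknown.
    return phrasebook.get((text, target_language), text)


print(translate_scene(b"", fake_describe, fake_translate, "es"))
```

Passing the two services in as plain functions keeps the pipeline independent of any one vendor, which makes it easy to swap providers when comparing them side-by-side.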

Research and development in computer vision is far from over. Computational power is increasing by the day and datasets are growing ever larger. We're proud to be leading the charge in our industry in creating new and exciting software based on these technologies. This allows us to provide our clients with best-in-class services built on the most cutting-edge tech. Keep up with our machine vision musings on our blog.

257 Turnpike Road, Southborough, MA

508.425.7533
