Cloud-native applications can give organizations seemingly contradictory results: huge networks that are lightning-fast, open communication with excellent security, tight integration with high adaptability. It’s made possible by a different approach to the whole idea of what an application is and how to build one. In this post, we’ll talk about how that works, what organizations should look out for and what the benefits of cloud-native applications are. But first, when we say ‘cloud-native,’ what exactly does that mean?

What is ‘cloud-native’?

Fully leveraging the advantages of the cloud requires approaching software, hardware, architecture and functionality differently. Cloud-native is the approach appropriate to operating successfully in the cloud.

The Cloud Native Computing Foundation explains it like this: ‘Cloud-native computing uses an open-source software stack to deploy applications as microservices, packaging each part into its own container and dynamically orchestrating those containers to optimize resource utilization.’ ‘“Cloud-native,”’ says Scott Carey, ‘is about how applications are created and deployed, not where.’

As an example, imagine you’re talking about being a ‘digital-native’ organization. Rather than print out MS Word documents, you might share Office 365 links or Google Docs; you might have a BYOD policy and a paperless office. The point is that you don’t just move the paperclips-and-box-files office onto computers. You can’t exactly do that, and it’s not the best use of digital tools anyway. Instead, at some point, you segue over from ‘digitized office’ to ‘digital office.’ In computing, Liron Golan refers to ‘the journey from Virtualized Network Function (VNF) to Containerized Network Function (CNF).’

That’s the difference between moving some parts of an operation (or even all parts) onto the cloud and being cloud-native. Crucially, it requires thinking about architecture differently, in a way that’s uniquely suitable for IoT installations.

Cloud-native architecture and IoT

Let’s look again at the description the CNCF gave. Cloud-native architecture builds your operation in the ‘shape’ of the cloud. Rather than virtualize traditional desktop computing, you put everything inside containers. That lets you (and, in a way, forces you to) create different types of applications and to view and structure networks differently. In particular, it means you have to approach development and deployment through the lens of containers.

Cloud-native applications are modularized: broken up into components that run separately inside their own containers. So they need to be developed that way too, rather than as monoliths. This gives your applications similar advantages to those conferred on industry by interchangeable parts: when something goes wrong, you can just slot another component in and carry on.

Using cloud services like EC2, S3, Lambda from AWS, and others, developers can now create applications that are inherently more reliable, scalable, secure and effective than ever before. Those qualities — and others, as we’ll see — are baked into the architecture, not added afterward by extra effort.

All of this will matter immensely to anyone creating applications in the future. Of teams using cloud-native development, 78% report lower operations costs, 76% say they experience better security, and 77% say they’re able to drive more rapid digital business growth.

Nor are businesses and the developer community unaware of these advantages. Presently, 95% of new applications are cloud-native and the number of back-end developers using popular containerization tool Kubernetes has risen to 31% — a 67% increase over the previous year.

However, it matters particularly to IoT, because cloud-native is the perfect match for IoT and edge computing. It allows ‘units of application’ to be moved around inside networks to where they’re needed, to be spun up or down as needed, and to be maintained and administered as independent components. Cloud-native-enabled gateways facilitate real-time services at the edge and at scale, and add value through machine learning and artificial intelligence. In short, it’s ‘the only realistic way to handle the load created by billions of devices,’ says Györgyi Krisztyián, allowing developers and organizations to ‘overcome the limitations of traditional connectivity models, which are inflexible and expensive for IoT, prolonging time-to-market and time-to-revenue.’

How cloud-native IoT works

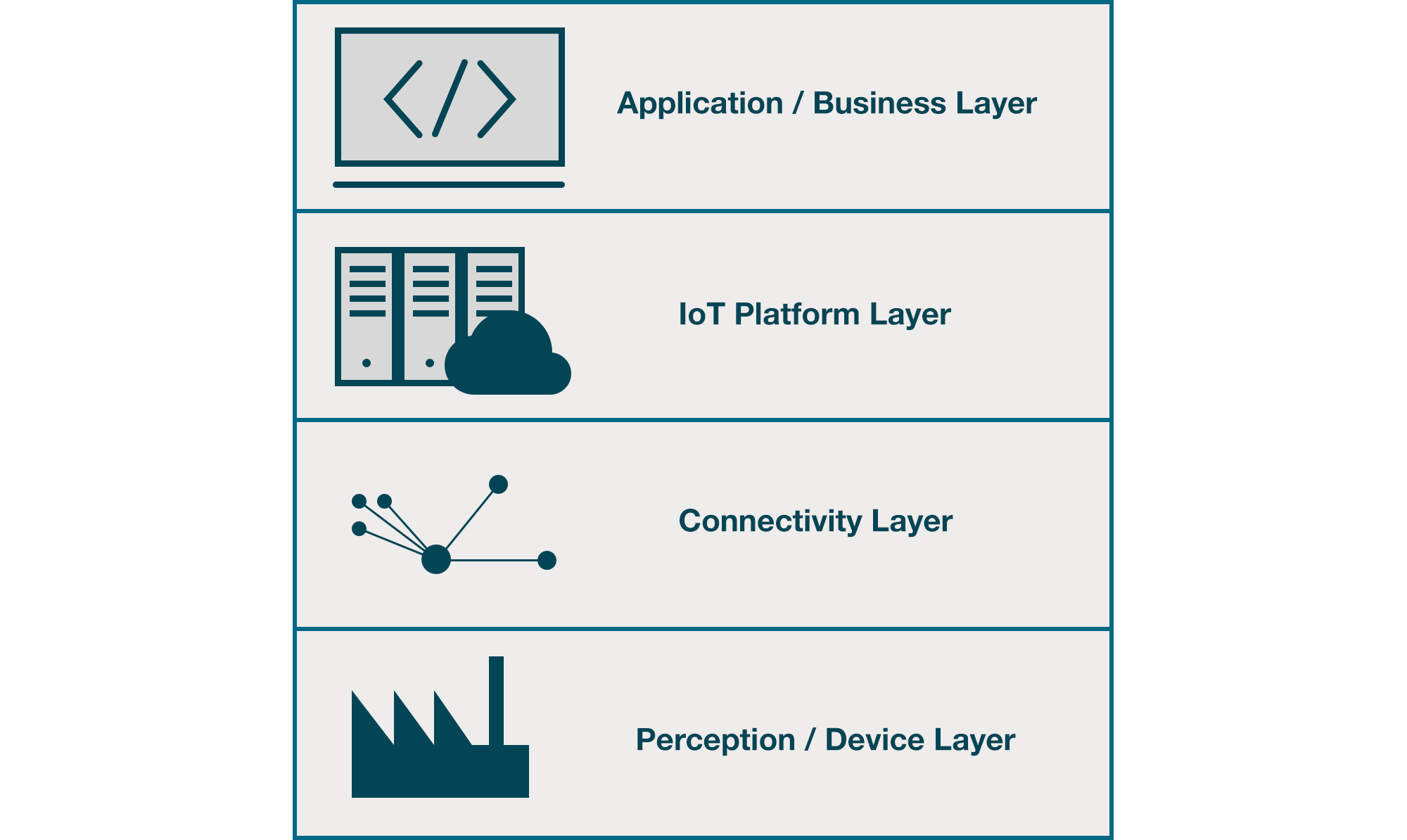

IoT networks can be modeled in several different ways. For the purposes of this post we’ll use a very simple four-layer structure:

- Perception layer is the IoT device network with its sensors.

- Connectivity layer is the Wi-Fi, 4G or 5G, Bluetooth or other communication protocol(s).

- IoT platform layer is the ‘middleware’ of IoT, processing, distributing and storing the data coming from the connectivity layer.

- Application layer is where the applications live that control the whole network and manage its data.
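To make the four layers concrete, here is a minimal Python sketch of one reading passing through them. Everything in it is an assumption for illustration: the function names, the `sensor-1` device, the JSON payload and the 30 °C limit are hypothetical, and a real installation would use actual radio protocols and an actual IoT platform rather than in-process function calls.

```python
import json


def perception_layer() -> dict:
    """Perception: a (hypothetical) sensor produces a raw reading."""
    return {"device_id": "sensor-1", "temperature_c": 21.5}


def connectivity_layer(reading: dict) -> bytes:
    """Connectivity: the reading is serialized for transport (JSON is an assumption)."""
    return json.dumps(reading).encode("utf-8")


def platform_layer(payload: bytes, store: list) -> dict:
    """IoT platform: decode the payload, store it, and pass it on."""
    reading = json.loads(payload.decode("utf-8"))
    store.append(reading)
    return reading


def application_layer(reading: dict, limit_c: float = 30.0) -> str:
    """Application: act on the data (here, a simple threshold check)."""
    return "alert" if reading["temperature_c"] > limit_c else "ok"
```

In a cloud-native deployment, each of these functions would typically live in its own service rather than in one process; the sketch only shows the direction in which data flows.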

The jobs that a specific IoT solution needs to get done should be the guide that determines the choice of IoT platform and other suitable technologies.

Elements of cloud-native IoT architecture

Cloud-native applications usually have the following things in common:

They’re containerized

Containerization, with containers orchestrated by tools like Kubernetes, is key to achieving the benefits of cloud-native IoT. Containers are a packaging format for applications and their runtime environments; unlike virtual machines, containers share the kernel of the host operating system they run on. They run isolated from each other, so they provide modular virtualization at the application level. They’re lightweight, quick to start, easy to scale, and port well to other environments.

They’re architected on microservices

Microservices are independent components or modules that can be executed, scaled, and managed as independent services. However, together they provide the same services as a monolithic application, communicating with each other via well-defined interfaces and APIs. They’re often developed by a dedicated agile team based on a technical domain or function, and Domain-Driven Design is a common choice.
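As a rough illustration of services that talk only through a well-defined interface, the Python sketch below has an ingestion service depend on an `AlertSink` contract rather than on any concrete alerting implementation. All names here (`Reading`, `AlertSink`, `IngestionService`, the 80 °C limit) are hypothetical, and the in-memory class stands in for what would normally be a separate service reached over the network.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass(frozen=True)
class Reading:
    """Message contract shared by both services (hypothetical schema)."""
    device_id: str
    temperature_c: float


class AlertSink(Protocol):
    """The only thing the ingestion service knows about its downstream peer."""

    def notify(self, device_id: str, message: str) -> None: ...


class InMemoryAlertService:
    """Stand-in for a separately deployed alerting microservice."""

    def __init__(self) -> None:
        self.sent: list[tuple[str, str]] = []

    def notify(self, device_id: str, message: str) -> None:
        self.sent.append((device_id, message))


class IngestionService:
    """Accepts readings and calls the alert interface when a limit is exceeded."""

    def __init__(self, alerts: AlertSink, limit_c: float = 80.0) -> None:
        self._alerts = alerts
        self._limit_c = limit_c

    def ingest(self, reading: Reading) -> None:
        if reading.temperature_c > self._limit_c:
            self._alerts.notify(
                reading.device_id,
                f"temperature {reading.temperature_c:.1f} C over limit",
            )
```

Because `IngestionService` depends only on the interface, the alerting side can be replaced, scaled or redeployed without touching the ingestion code, which is the property the microservice approach is after.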

They use DevOps and continuous delivery

DevOps combines two roles which are traditionally isolated: development and operations. It’s necessary because fully leveraging cloud-native IoT requires not merely restructuring applications: the development process, team, and ultimately the organization must also be reoriented. Continuous delivery involves iterative cycles of updates and improvements, allowing for more adaptability as well as faster responses to bugs and problems.

Benefits of cloud-native IoT architecture

Horizontal scalability

Cloud-native IoT can add containers, gateways and devices very quickly. Typically, such solutions follow preset administrator policies to automatically scale up and down their computing and storage capacities in response to sudden changes in IoT data volumes. These two factors, network size and data volume, aren’t 1:1 related — a warehouse might add no new devices but see a surge in data at Christmas when order volume soars, for instance. Cloud-native IoT’s capacity to be responsive to each while decoupling them is a major competitive advantage.
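A preset scaling policy of this kind can be sketched in a few lines of Python. This is an illustrative assumption, not the algorithm of any particular orchestrator: the `ScalingPolicy` fields and the per-replica target of 1,000 messages per second are made up for the example. Note that the input is data volume, not device count, which is what lets the two be decoupled.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class ScalingPolicy:
    """Administrator-defined limits (hypothetical values)."""
    target_msgs_per_replica: int = 1000
    min_replicas: int = 1
    max_replicas: int = 20


def desired_replicas(msgs_per_second: float, policy: ScalingPolicy) -> int:
    """Return how many replicas the policy asks for at the current load."""
    # Enough replicas to keep each one at or below its target throughput.
    needed = math.ceil(msgs_per_second / policy.target_msgs_per_replica)
    # Clamp to the administrator's floor and ceiling.
    return max(policy.min_replicas, min(policy.max_replicas, needed))
```

With the default policy, a seasonal surge from 800 to 2,500 messages per second would move the target from one replica to three, even if no new devices were added.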

High throughput

IoT installations have to handle large volumes of data without degrading system performance. IoT traffic is so-called ‘bursty’ traffic, and data sources can be highly synchronized. Even though each individual IoT device might be low-powered compared to a desktop computer, there can be a large number of gateways — and the resource and application logs are concomitantly large. They must be transferred, correlated and analyzed to detect network issues. In addition, the volume of application data can be relatively high even if sensor data volume is low. Cloud-native tools like Apache Kafka use massively parallel processing, processing high-throughput datasets without slowing down. Cloud-native architecture also permits moving computing closer to the edge and thus reducing the quantity of data that must be transferred and processed in parallel.
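One way computing at the edge can reduce the data that must be transferred is to summarize a window of raw samples before sending anything upstream. The Python sketch below assumes a hypothetical gateway function, `summarize_window`, and an arbitrary choice of summary fields; which statistics are worth keeping depends entirely on the application.

```python
from statistics import mean


def summarize_window(device_id: str, samples: list[float]) -> dict:
    """Collapse one window of raw sensor samples into a single summary record."""
    if not samples:
        raise ValueError("cannot summarize an empty window")
    return {
        "device_id": device_id,
        "count": len(samples),
        "min": min(samples),
        "max": max(samples),
        "mean": mean(samples),
    }
```

A gateway that collects, say, 600 samples per minute per device would then forward one record per minute instead of 600, at the cost of losing the individual values.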

Reduced latency

For some applications, latency isn’t much of an issue. For use cases like self-driving vehicles or industrial automation, it’s mission-critical. Edge computing drives down latency by moving computation, sensing and action closer together. However, this must be matched by system architecture that can support low latency. There is no single mechanism to achieve this: developers and system architects need to match the requirements and traffic patterns of each individual application. For example, MapReduce can be used to move computing closer to sensors (‘edge computing’) and to leverage the power of in-memory databases that accelerate processing.

No single point of failure

Microservices running in containers can be architected in such a way that there is no single point of failure throughout the network. No one component can fail and disable or seriously compromise the entire installation. Container orchestration systems like Kubernetes or Docker Swarm let developers create robust, modular systems — Kubernetes in particular permits IoT platform monitoring and failure recovery, independently from the cloud infrastructure. Thus, robustness moves onto the cloud itself: even a hardware or software failure won’t significantly affect overall operations.
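The idea of avoiding a single point of failure can be illustrated from the caller’s side: if a service runs as several replicas, a request can fall through to the next replica when one is down. The Python sketch below is a simplified assumption of that pattern. `call_with_failover` and `AllReplicasFailed` are hypothetical names, replicas are modeled as plain callables, and in practice an orchestrator or load balancer usually handles this rather than application code.

```python
from typing import Callable, Sequence


class AllReplicasFailed(Exception):
    """Raised only when every replica of a service is unreachable."""


def call_with_failover(
    replicas: Sequence[Callable[[str], str]], payload: str
) -> str:
    """Try each replica in turn and return the first successful response."""
    errors = []
    for replica in replicas:
        try:
            return replica(payload)
        except ConnectionError as exc:
            # This replica is down; remember why and try the next one.
            errors.append(exc)
    raise AllReplicasFailed(f"{len(errors)} replica(s) failed")
```

As long as at least one replica is healthy the caller still gets an answer, so the loss of any single container does not take the service down.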

Security

Cloud-native, with its emphasis on containerization, addresses one of the biggest challenges IoT installations face: managing security across a network that offers unprecedented attack surface. Misconfigurations or known vulnerabilities affected over half of respondents to a recent survey by Snyk, indicating that there’s still room for improvement. Many leaders see the security potential in cloud-native IoT — and even where they’re wary, 64% cite better security across their company and its customer data as a benefit of adoption. Modular system architecture allows developers to build more securely from the start rather than seek to strengthen or reinforce centralized systems.

How AndPlus can help

As we’ve seen, there’s significant interest in the benefits and advantages of cloud-native IoT. However, there are also some headwinds. Many organizations (64% of those questioned by IBM last year) report that they have insufficient internal expertise with cloud platform technologies; 51% said they would have difficulty identifying which applications would benefit from being rebuilt as cloud-native, and 48% expressed uncertainty about the time and costs involved in building cloud-native applications.

AndPlus has extensive experience building cloud-native applications for organizations in multiple verticals, building in partnership with clients to create, tailor or improve existing applications to harness the power of cloud-native technology.

Takeaways

- Cloud-native is a natural companion for edge computing and IoT.

- Cloud-native applications are built and deployed as microservices operating inside containers, which communicate but are separate.

- Because of this, cloud-native architecture can be modular, secure, scalable, and fast.

- Most organizations face uncertainty on skills, price, timescale and prioritization when considering cloud-native application development, and would benefit from working with a trusted development partner.