For companies getting into the IoT space, the cost of software development can be both an obstacle and an unknown. Conventional application development isn’t a great guide, and the variety of IoT implementations means it can be difficult to estimate from limited experience.

In this post, we’ll look at what IoT software development actually costs, which factors affect costs, and how to keep project costs down. We’ll start by considering IoT software development costs in the context of IoT as a whole.

IoT software development costs as a proportion of IoT costs

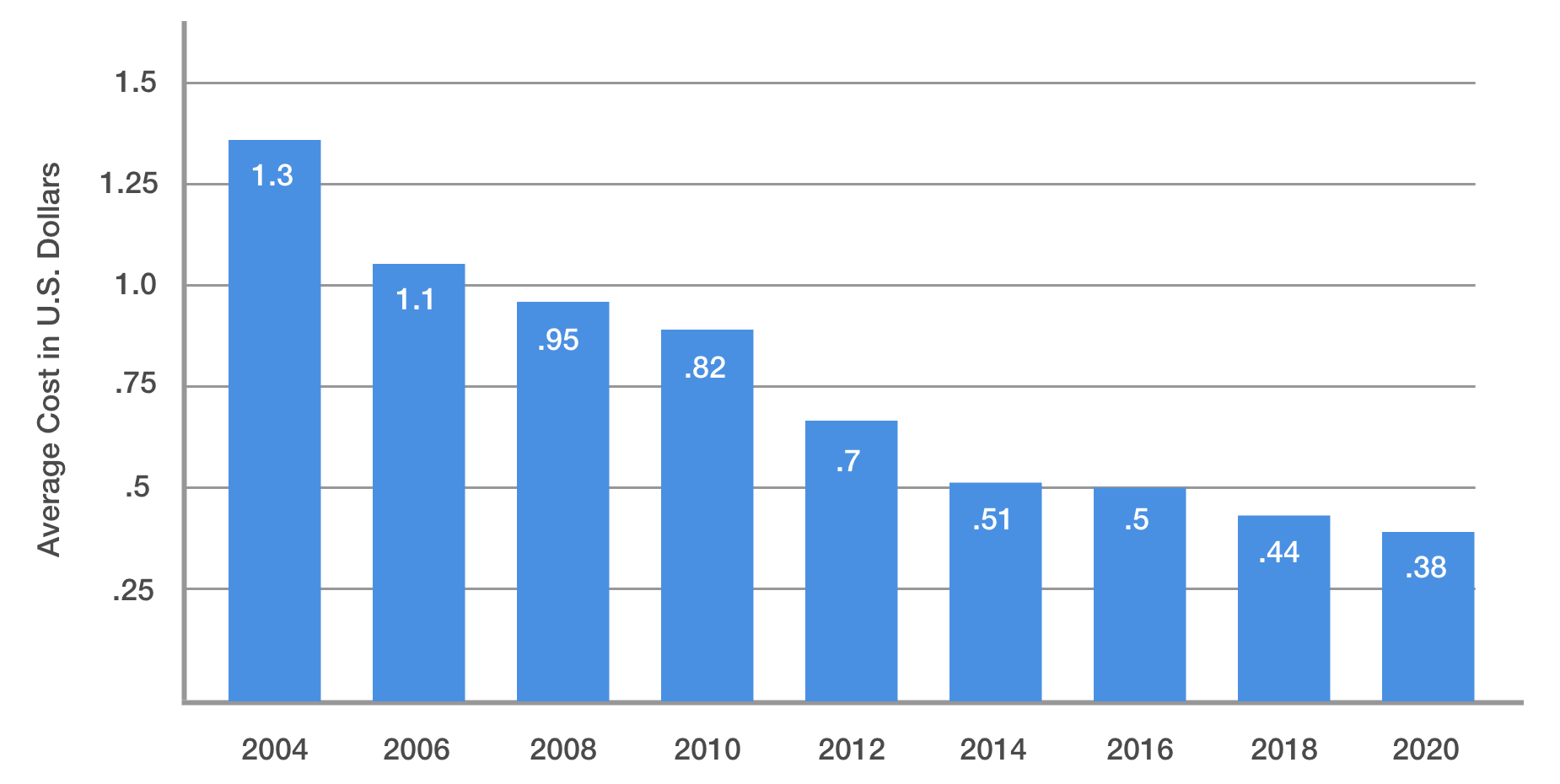

The overall costs of constructing an IoT installation are falling, but the fall isn’t evenly distributed. Unsurprisingly, it’s concentrated in those areas of IoT that are susceptible to commodification, or to the cost reductions that come with very easy replication. For example, the costs associated with IoT devices are falling. In 2004, the average price of an IoT sensor was $1.30; in 2018, it was 44¢. Bluetooth Low Energy tags can now be bought for less than this, and ‘Next year, we'll get [them for] less than 10 cents,’ said Wiliot’s SVP of Marketing Steve Statler in April 2022.
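To put those two sensor prices in perspective, here is a quick back-of-the-envelope calculation. It uses only the figures quoted above; the idea that the price fell at a constant yearly rate is our simplifying assumption, not something the data source states.

```python
# Average sensor prices quoted above, in US dollars.
price_2004 = 1.30
price_2018 = 0.44
years = 2018 - 2004

# Total decline over the period, as a fraction of the 2004 price.
total_decline = (price_2004 - price_2018) / price_2004

# Equivalent constant yearly rate of change (assumes a smooth, compound decline).
annual_rate = (price_2018 / price_2004) ** (1 / years) - 1

print(f"Total decline: {total_decline:.1%}")
print(f"Average yearly change: {annual_rate:.1%}")
```

In other words, the price fell by roughly two-thirds over fourteen years, which works out to a little over 7% a year if the decline had been steady.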

Average cost of IoT Devices in U.S. Dollars (Source)

Similar effects are in play for cloud computing access, which is usually offered through large vendors on an Infrastructure-as-a-Service basis; access to this starts at pennies too. Connectivity prices are falling and will soon see a sharp drop as the vastly more capable 5G becomes widely available.

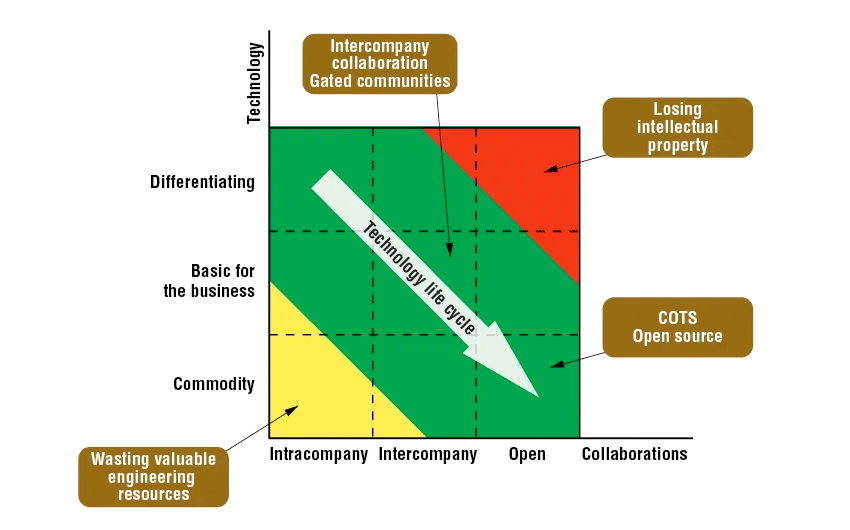

In all these cases, the same pattern is observed, one that’s familiar from the history of any business. First, a technology is pioneering and has to be built by hand; then it’s specialist and has to be built by highly capable small firms; then it’s common; then it’s ubiquitous.

Computing followed this pattern, and now so have connectivity and sensor technology. But applications aren’t susceptible to this process in the same way. Software is the only cost factor in IoT that isn’t falling; instead, software costs are getting more varied. The cheapest IoT apps now cost less than they did even two years ago; the most expensive cost more. That’s because basic functionality can now be copied and re-used, while apps that control complex, high-functioning networks in an efficient and secure manner, especially if they’re required to integrate with other applications, now offer more features, more functionality, and more ROI.

Anatomy of IoT software development costs — and how to reduce them

The software required to support an IoT installation consists of several layers, including:

- Embedded and edge computing software, now often supplied as part of an infrastructure package.

- Middleware server-side software like runtime environments. This might be a containerized system like Docker if your IoT implementation is cloud-native, or a more traditional virtualization solution.

- The application services that ingest, process, store, integrate and analyze device data, display them to users, and permit user control.

We’ll look at all these factors as we lay out how costs are apportioned in IoT app development. However, it’s important to remember that these aren’t separate items, but components of a whole. Change the OS you use and you change the infrastructure options available; each part has to make sense in terms of all the others.
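To make the application-services layer a little more concrete, here is a minimal sketch of the ingest, store, and analyze steps. Everything in it is hypothetical: the `device_id,value` message format, the `Reading` type, and the in-memory dictionary standing in for a database are illustrations we made up, not part of any particular platform.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Reading:
    device_id: str
    temperature_c: float


def ingest(raw_messages):
    """Parse raw 'device_id,value' strings into readings, skipping malformed ones."""
    readings = []
    for message in raw_messages:
        try:
            device_id, value = message.split(",")
            readings.append(Reading(device_id.strip(), float(value)))
        except ValueError:
            continue  # wrong number of fields or a non-numeric value: drop it
    return readings


def store(readings, database):
    """Append each reading's value to an in-memory dict keyed by device."""
    for reading in readings:
        database.setdefault(reading.device_id, []).append(reading.temperature_c)


def analyze(database):
    """Return the mean temperature recorded for each device."""
    return {device_id: mean(values) for device_id, values in database.items()}


database = {}
store(ingest(["sensor-1,21.5", "sensor-2,19.0", "garbled", "sensor-1,22.5"]), database)
summary = analyze(database)
print(summary)
```

Even in a toy like this, the layers depend on each other: the message format the devices emit dictates what the ingest step has to tolerate, and the storage choice shapes what analysis is cheap to run.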

Back end and infrastructure costs

These are the costs you incur when you need back-end processing to handle data. Infrastructure costs aren’t an application development cost per se, but they speak to application development costs because of how they affect which services your application will need to provide, how much control you have over your infrastructure, and the relationships between the infrastructure, device, communication, and application layers.

There are two basic options: build a web server from scratch, or use an existing cloud platform. The cloud platforms that are best for IoT are often full frameworks, which bring together:

- Hardware devices such as sensors, controllers, micro-controllers, and other hardware elements

- Software applications that configure controllers and operate them remotely

- Communications systems and options like Bluetooth Low Energy or RFID

- An application layer, running over devices and networks to deliver results

(Read more about IoT frameworks here.)

Some frameworks are open-source, while others are proprietary offerings from major companies like Amazon, Google, and Microsoft. While they differ, they all offer a solution that lets you build an application, plug it into their services, and access the benefits of IoT.

How can you reduce infrastructure costs?

If you’re building an application for a relatively small network, look to Google and Microsoft. Google is slightly cheaper, but Microsoft comes with integration with the rest of the widely-used Windows ecosystem and a choice of two types of OS for IoT.

However, if you’re building an application for a large, complex network, or one with specialized functions, Amazon Web Services may be a better choice. Many high-end data analysis functions are available through AWS and can simply be ‘plugged in’ to your project as needed, often for significantly less than it would cost to reinvent the wheel yourself. Among a range of solutions for industries including IoT, Amazon Web Services offers monitoring, connectivity and much more through partners and its own machine learning, AI and data management tools.

Finally, there’s a trade-off between the additional work hours required to build applications on many open-source solutions, and the additional costs that come with proprietary tools. Ultimately, the question of how to reduce IoT infrastructure costs doesn’t have a clear answer that will work for everyone. Instead, it’s a question of selecting the right tool based on an understanding of the business and technical requirements of your project.
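The trade-off can be sketched as simple arithmetic: development labour plus recurring platform fees over whatever period you’re planning for. Every number below is a hypothetical placeholder chosen only to show the shape of the comparison; none of them is a real price or an estimate for any vendor.

```python
def total_cost(build_hours, hourly_rate, monthly_fee, months):
    """Development labour plus recurring platform fees over a planning horizon."""
    return build_hours * hourly_rate + monthly_fee * months


def compare(months):
    """Return (open_source_cost, proprietary_cost) for a horizon in months."""
    # Hypothetical placeholder figures, not real prices or estimates.
    open_source = total_cost(build_hours=1200, hourly_rate=100, monthly_fee=200, months=months)
    proprietary = total_cost(build_hours=700, hourly_rate=100, monthly_fee=2500, months=months)
    return open_source, proprietary


for months in (12, 24):
    open_source, proprietary = compare(months)
    cheaper = "open source" if open_source < proprietary else "proprietary"
    print(f"{months} months: open source {open_source}, proprietary {proprietary}, cheaper: {cheaper}")
```

With these made-up inputs, the proprietary option comes out ahead over 12 months and the open-source option over 24. The point isn’t the numbers; it’s that the answer can flip depending on how long the installation will run.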

Application functionality

What do you need your application to be able to do? This is the first place where you can intervene directly in the app development process and reduce costs, and it comes before you write a single line of code or deploy a single sensor. That’s because it’s really a business decision, consisting of two questions:

- What do we want?

- What’s the simplest way to get it?

The best practice for application development in IoT is the same as for other spaces. Functionality should be kept to a minimum, and core features should be heavily emphasized. Development time is costly, so it’s important to focus on the functionality that will actually deliver profitability. Discussions should focus not on what to build but on what you can get away with leaving out.

However, there are specific points at which IoT application planning and development differ from best practices in app development generally. These are determined by the app’s interaction with the IoT network and infrastructure layer. The average mobile application doesn’t field data from a thousand sensors.

How can you reduce application functionality costs?

The three places you can reduce costs here are work hours, costs from other components like sensors and infrastructure, and costs from overrun and failure. Work hours and risk can be handled by choosing the right development method: continuous delivery from a team with experience with IoT application development reduces the chance that you get halfway through the process and realize other features are needed. It also increases the likelihood that bugs are ironed out before full production. Infrastructure costs can be dealt with by choosing the most appropriate infrastructure for your application’s required functionality.

Operating system choice

Different IoT operating systems offer different functionality. They might integrate differently with other network components, or make sense in terms of platform or application feature choices. Most IoT networks rely on Linux for their devices, but this doesn’t always mean their applications should also be built to run on the Linux OS. (To learn more about IoT device OS options, read this post.) We often select Android as the most appropriate OS for user-facing applications, as we did with this precision airflow control system for use in critical room environments.

How choosing the right operating system can help reduce application development costs

Selecting the right OS can let you develop an application with the features you need, using less work time and with better integration with the rest of your IoT installation. Look for operating systems that allow you to build the functionality you want with as little effort as possible. That usually means one that either has its own development language or permits development in OS-agnostic languages like Java and Python. Again, this is a question of making sure all the puzzle pieces fit together, so choices have to be made coherently.

UX and UI

What path will users take through your application, and what user interface elements need to be in place to facilitate that path? Again, you should always seek to simplify, but some functionality simply requires a more complex application. Typically, UX design will lead the application development process, informed by market research and user testing; a full-stack prototype application will be available quite early in the process.

Because IoT applications are more complex in scope than most applications, this can actually extend the development process. It’s better to keep feedback cycles as short as possible. We’re proud to have pioneered applying agile development principles to UX development, relying on lots of user feedback and short iteration cycles to dial in an experience that facilitates what users want from the application.

One way to do this is to model functionality and UX separately. When we developed our application for NexRev, an aftermarket HVAC provider, we created an MVP (Minimum Viable Product) early on in the development process. Reliant on manual data entry, this MVP let us validate the app’s functionality; then we were able to build UX to facilitate it.

How to save UX and UI costs

Try not to reinvent the wheel: there’s a large body of standardized design choices, from the Z pattern to the hamburger menu, that are used because they work. Deviate from them only if there’s a good reason to. Try to correlate UI, UX and functionality as closely as possible: it looks like this so the user can do this, so the app can do this.

Security requirements

IoT installations have more complex security requirements than standard web or mobile applications. With endpoints numbering into the hundreds or thousands, they’re uniquely vulnerable. And when they’re deeply involved in key business operations, they’re doubly irresistible targets for hackers. Many attacks take place at the device or network level, but web and mobile applications also make tempting targets. (Read more about IoT security here.)

Reducing costs associated with IoT application security requirements

Security requirements should be identified in advance. Doing this accurately is key to reducing costs, because failure to do so can result in major losses if security is breached. IoT applications should be built using up-to-date security standards and protocols, including those suggested by the National Institute of Standards and Technology as well as the IoT Cybersecurity Improvement Act. These are good places to start from, but security for the application layer should be a constant concern, considered at every stage of the development process, owned by everyone involved, and tailored to the needs of the specific business and installation.
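As one small illustration of what security at the application layer can look like, the sketch below checks that a device payload hasn’t been altered in transit by using an HMAC, a generic message-authentication technique available in Python’s standard library. It’s our own example rather than something prescribed by the standards mentioned above, and the key, payload format, and function names are all hypothetical.

```python
import hashlib
import hmac


def sign(payload: bytes, key: bytes) -> str:
    """Return a hex-encoded HMAC-SHA256 signature for the payload."""
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def is_authentic(payload: bytes, signature: str, key: bytes) -> bool:
    """Recompute the signature and compare it in constant time."""
    return hmac.compare_digest(sign(payload, key), signature)


# Hypothetical shared secret; a real deployment would provision and rotate keys securely.
key = b"demo-key-not-for-production"
payload = b'{"device_id": "sensor-1", "temperature_c": 21.5}'
signature = sign(payload, key)

# Simulate a payload that was modified after it was signed.
tampered = payload.replace(b"21.5", b"99.9")

print(is_authentic(payload, signature, key))
print(is_authentic(tampered, signature, key))
```

A check like this only covers message integrity between a device and the application. It doesn’t replace transport encryption, access control, or the broader, installation-specific planning described above.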

How AndPlus can help

IoT application development involves building an application to control a unique digital structure. Final costs are hard to predict. The only way to reliably keep them down is to approach the task with the requisite experience and range of skills to both plan and execute development in the most efficient manner possible.

AndPlus has helped pioneer the process of effective and efficient IoT application development. We’ve worked with a varied client base to create specialized industrial installations that were secure and powerful. At the same time, we’ve taken the principles of agile development and made them relevant to the specific requirements of IoT development.

We’re keen proponents of building two- to three-year roadmaps that let us design not just solutions, but ways to reach them. Along the way, we’ll help your organization, not just your software, meet the challenges of IoT adoption.

Takeaways

- IoT application development is more complex and demanding than traditional web and mobile development. However, many of the same principles and best practices still apply.

- Each factor in the cost of an IoT application is dependent on the others. Cost management needs to be strategic and depends on having a development team with the requisite skills.

- Companies planning an IoT application should seek partnerships with experienced developers who can make the right decisions about components, functionality, and development practices in concert with them.