One of the biggest mistakes a business can make is to assume today’s success will be there tomorrow. A company can thrive for generations, relying on a tried-and-true formula for success. It’s easy to fall into the trap of believing that what has worked in the past will continue to work in the future.

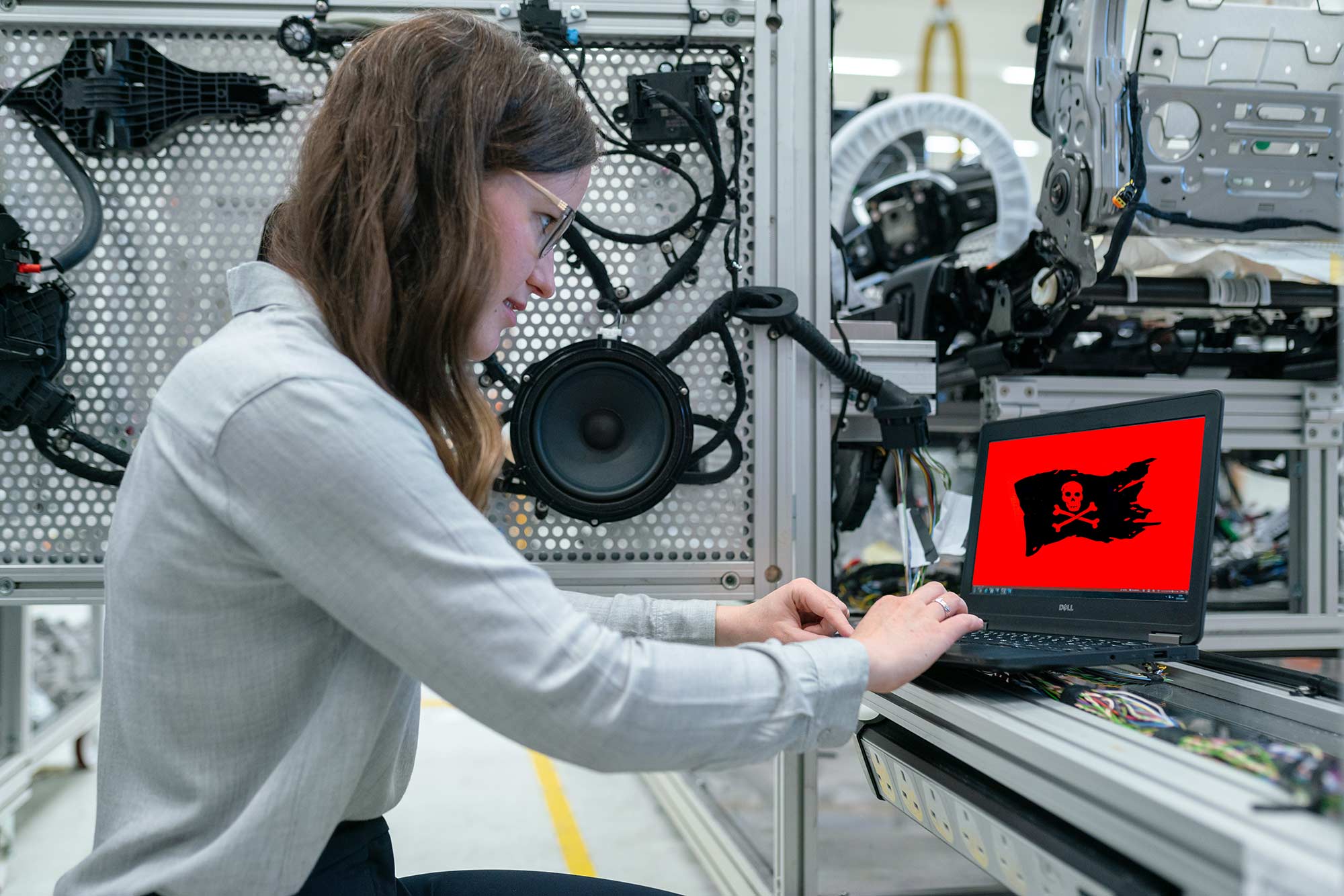

All it takes is one disruptive technology, one upstart company that comes out of nowhere with a better, faster, cheaper, more convenient way to deliver the same products and services, to completely upend an established company’s entire business model.

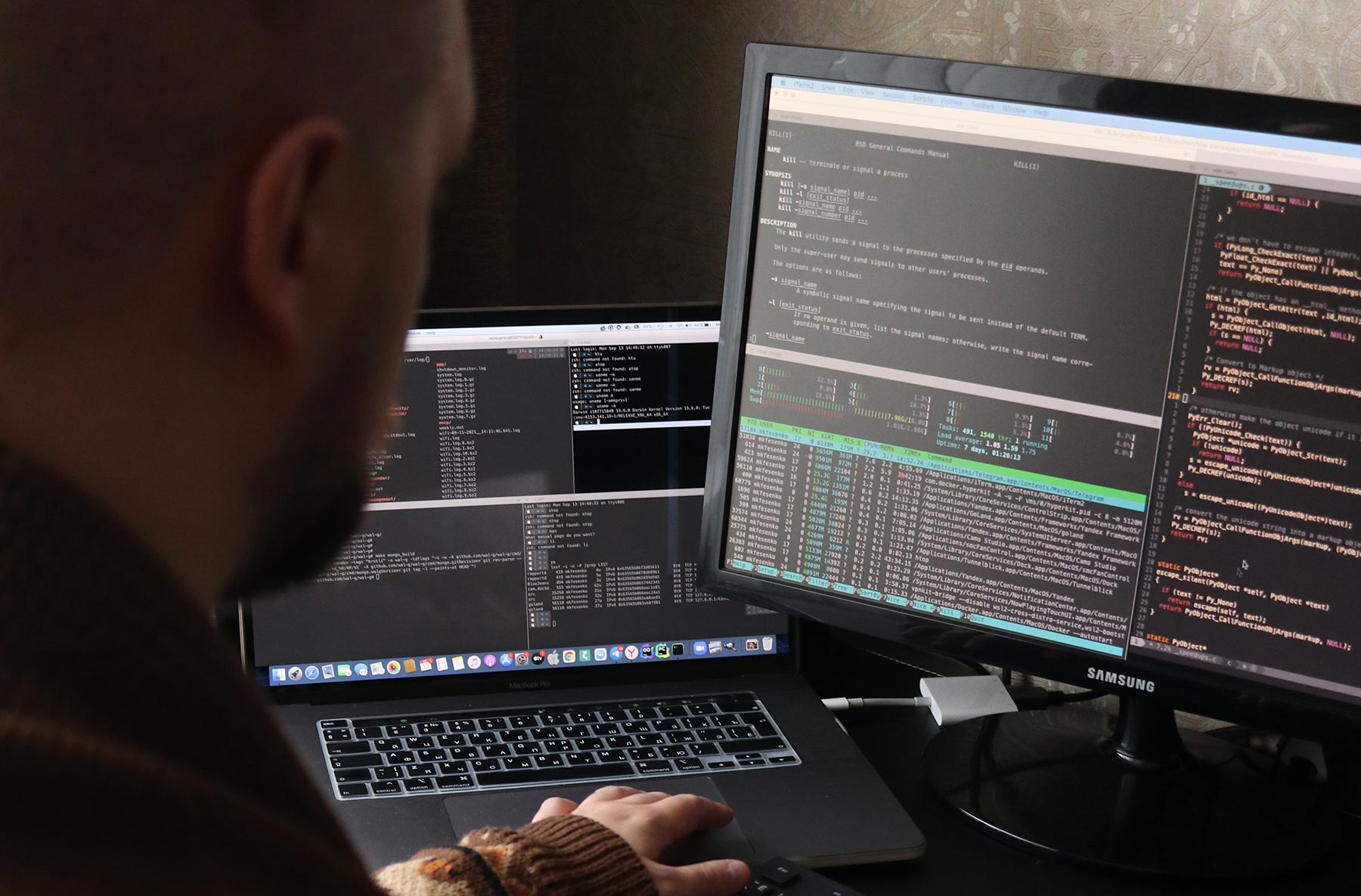

No one can predict the future, so it’s inherently difficult to anticipate these developments. But there are things businesses can do to put themselves in a better position to respond when such disruptions occur. The most important of these is digital transformation.

Digital Transformation: A Review

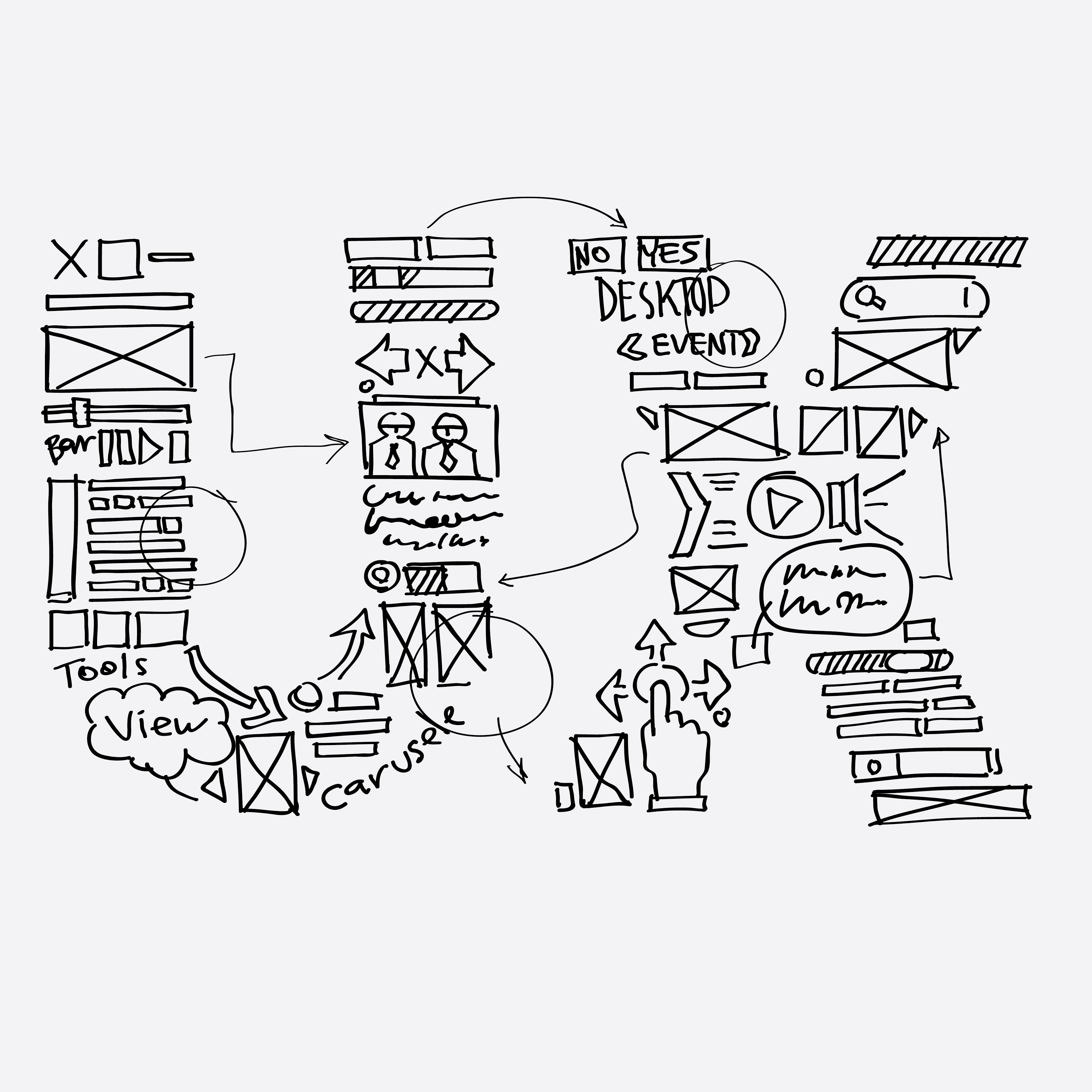

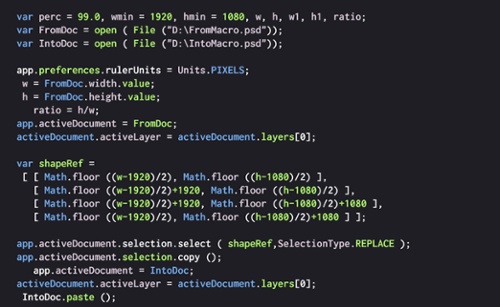

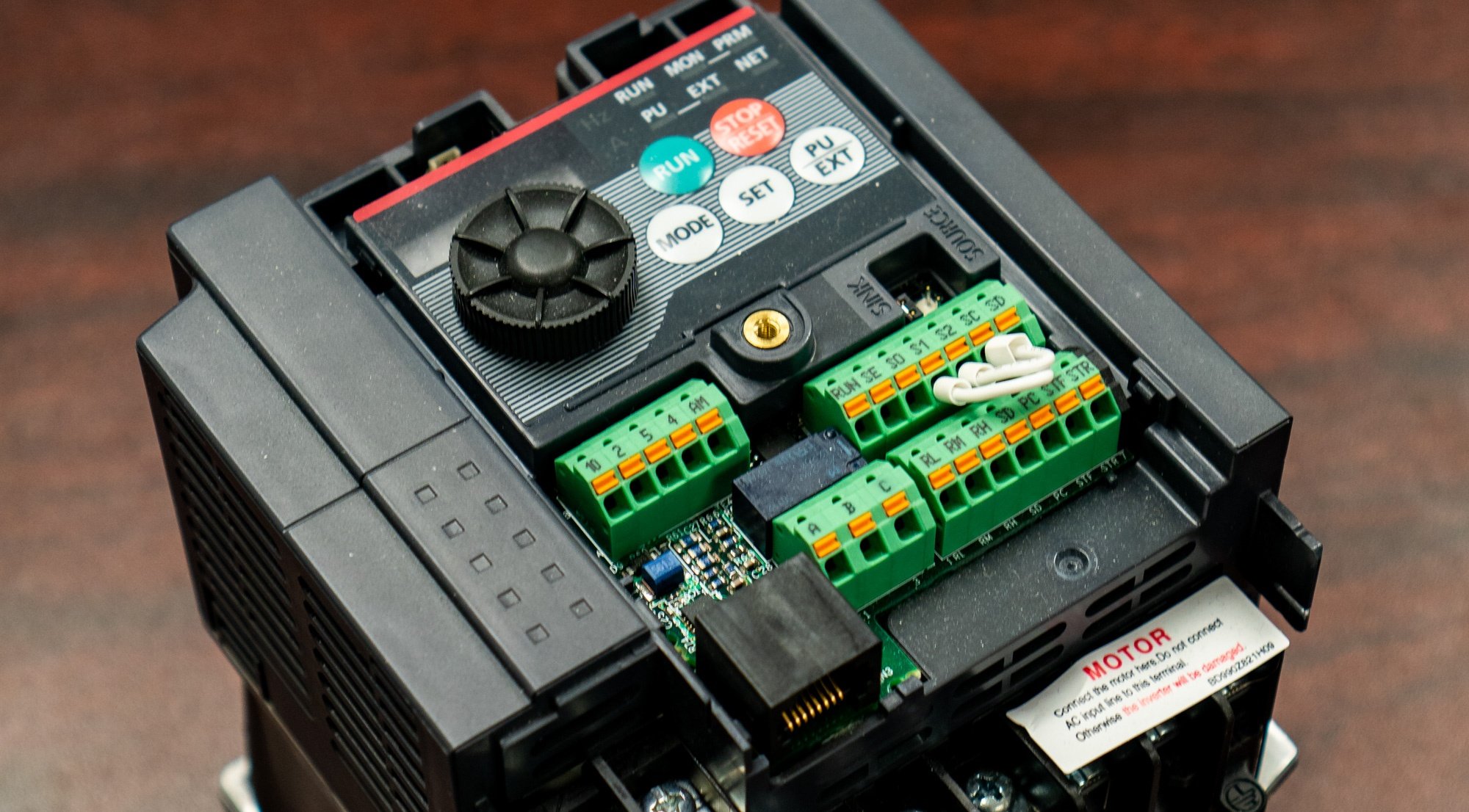

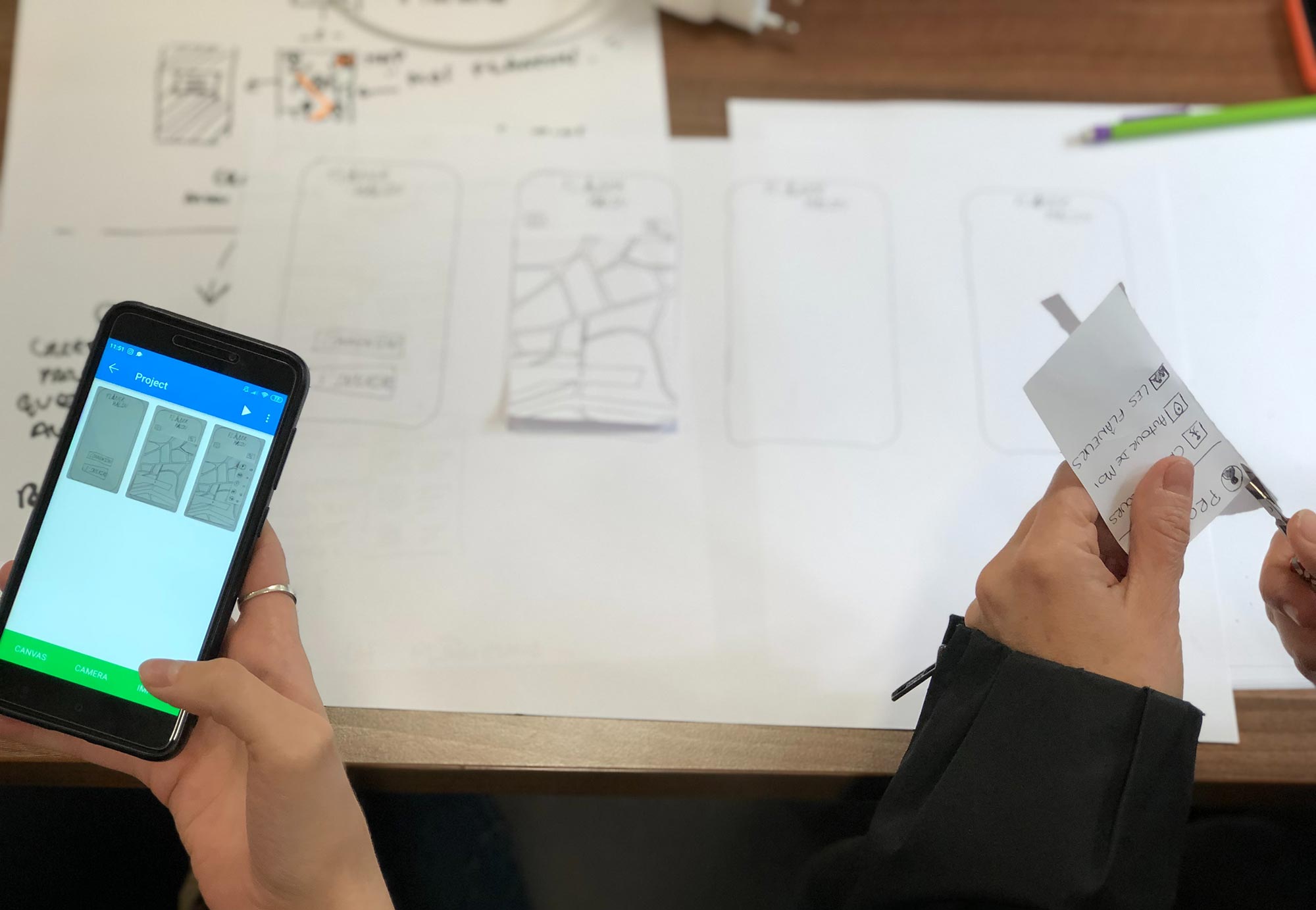

AndPlus understands digital transformation as the process of organizational change brought about by the use of digital technologies and business models to improve performance. Under this definition, digital transformation must include the following:

- A business objective: typically, a desire to “move the needle” on some key performance metric

- A foundation of one or more digital technologies

- Organizational change, which includes some combination of processes, people, and strategy

It’s a tall order, and not easy to pull off. Many organizations treat it as no more than a buzzword. Their reasoning amounts to, “All the cool companies say they’re pursuing digital transformations, so we’ll make the same claim,” while they remain light on the specifics of what’s actually being transformed.

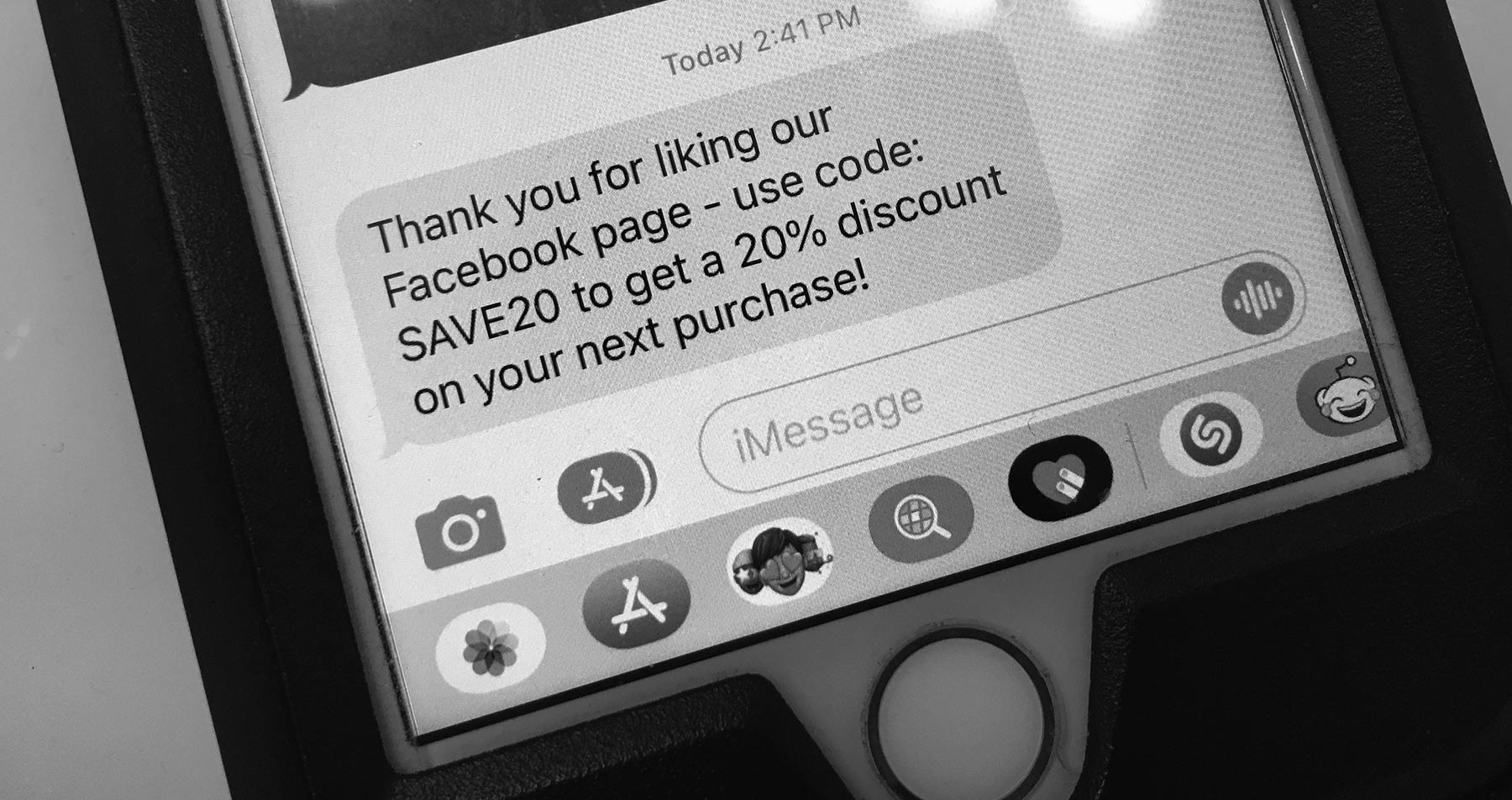

Dig a little deeper and you’ll find that businesses that successfully execute one or more digital transformations are better able to attract and keep happy customers. These organizations reduce inefficiencies, eliminate cumbersome manual processes, and lower costs while preparing for important market changes.
